Have you ever had to set up a meeting with clients or co-workers in different time zones? Finding a time that works for everyone can be frustrating and time-consuming. A slot that suits your team in New York City may fall in the middle of the night for attendees in Singapore. Workplace inefficiencies like this can be eliminated when your device runs Artificial Intelligence (AI) locally, accelerated by its chipset. Recent advances in AI model optimization unlock a wide range of fine-tuned, context-aware applications that promise major productivity gains for users.

By shifting traditional AI and generative AI inference workloads onto the device, developers can enhance data privacy, decrease latency, and cut networking costs, the same factors that have historically held back widespread AI adoption. The result? Device refresh cycles will shorten, because AI productivity applications help justify the purchase of new PCs, smartphones, notebooks, and other personal and work devices.

On-device AI isn't just about enterprise productivity tools. It's about empowering device manufacturers and software developers to create a whole new range of consumer and enterprise-facing applications. Imagine a world where your device can help you organize your travel itinerary or manage your smart home appliances to optimize energy usage. That's the potential power of bringing AI directly on-device.

Given the transformative impact of AI devices, ABI Research foresees a bright future for AI chipset development. Heavy-hitting vendors like Qualcomm, Intel, AMD, MediaTek, and NVIDIA have demonstrated their focus on seizing this emerging opportunity.

A New Reason to Upgrade Your Devices

The average consumer views their smartphone as an entertainment device (social media, texting, news, etc.). However, this perception will likely change with the advent of on-device AI, which makes the smartphone a device for both entertainment and productivity (e.g., scheduling get-togethers, creativity apps, etc.). To the benefit of chipset vendors and device Original Equipment Manufacturers (OEMs), this dual appeal is expected to shorten device refresh cycles from 4-5 years to less than 2 years.

This trend holds equally true in the enterprise space, where organizations equate time savings with cost savings. If, for example, an employee can cut the time spent on administrative tasks from 2 hours to 15 minutes a day, that frees up 1 hour and 45 minutes of productive time daily. The savings compound when on-device AI is deployed across many employee devices. Recent survey results indicate that 70% of the global workforce is already open to using AI tools to lighten their workloads, which should ease the transition to productivity AI.
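
To make the example above concrete, the short Python sketch below works through the arithmetic. The 2-hour and 15-minute figures come from the text; the number of working days per year (230) and the headcount (1,000) are hypothetical assumptions chosen only for illustration.

```python
# Back-of-the-envelope productivity arithmetic for the example above.
# BEFORE_MIN and AFTER_MIN come from the text; WORKDAYS_PER_YEAR and
# HEADCOUNT are hypothetical values used only for illustration.
BEFORE_MIN = 120         # 2 hours of admin work per day
AFTER_MIN = 15           # 15 minutes per day with on-device AI
WORKDAYS_PER_YEAR = 230  # assumption
HEADCOUNT = 1_000        # assumption: employees with AI-enabled devices


def hours_saved_per_year(before_min: int, after_min: int, workdays: int) -> float:
    """Hours a single employee saves over one year."""
    return (before_min - after_min) * workdays / 60


per_employee = hours_saved_per_year(BEFORE_MIN, AFTER_MIN, WORKDAYS_PER_YEAR)
fleet_total = per_employee * HEADCOUNT

print(f"Saved per employee per day: {BEFORE_MIN - AFTER_MIN} min")
print(f"Saved per employee per year: {per_employee:.1f} h")
print(f"Saved across {HEADCOUNT:,} employees: {fleet_total:,.0f} h")
```

Under these assumptions each employee regains roughly 400 hours a year, and because the savings scale with every AI-enabled device, the organization-wide total grows with headcount.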

Mobile and PC AI Chipset Development

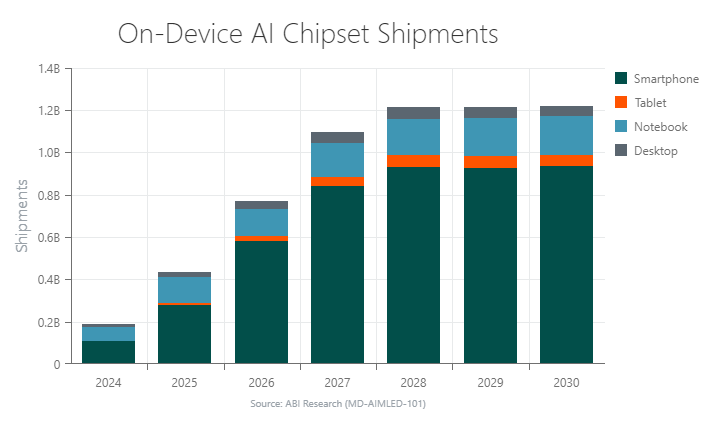

The year 2024 marks the start of on-device productivity AI chipsets shipping in personal & work devices, with 163.3 million heterogeneous AI chipsets expected to ship. My team and I see demand for on-device AI as most pronounced in the smartphone and PC markets. Chipsets like Qualcomm’s Snapdragon 8 Gen 3 System-on-Chip (SoC) power AI smartphones, while Intel’s Core Ultra processors and AMD’s Ryzen 7000 and 8000 series chipsets are leading the way for AI PCs.

As the AI device landscape matures in the coming years, annual heterogeneous AI chipset shipments for personal & work devices will soar to 1.2 billion by 2030. Smartphones will account for the overwhelming majority of these shipments, reaching 935 million units in 2030.

While a smaller opportunity for chipset vendors, ABI Research forecasts PC AI chipset shipments (desktop, tablet, notebook) to increase from 53.8 million in 2024 to 263.1 million in 2030.
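
A quick way to compare the forecasts above is the compound annual growth rate (CAGR) they imply. The sketch below computes it from the 2024 and 2030 figures quoted in the text; treating "1.2 billion" as exactly 1,200 million is a rounding assumption.

```python
# Compound annual growth rate (CAGR) implied by the shipment forecasts
# quoted in the text (units: millions of chipsets, 2024 -> 2030).
# Assumption: "1.2 billion" is treated as exactly 1,200 million.


def cagr(start: float, end: float, years: int) -> float:
    """Return the constant yearly growth rate that turns start into end."""
    return (end / start) ** (1 / years) - 1


forecasts = {
    "All personal & work devices": (163.3, 1200.0),
    "PCs (desktop, tablet, notebook)": (53.8, 263.1),
}

YEARS = 2030 - 2024  # six years of growth

for segment, (start, end) in forecasts.items():
    print(f"{segment}: {cagr(start, end, YEARS):.1%} per year")
```

With these inputs, total shipments grow at roughly 39% per year and PC shipments at roughly 30% per year, which is consistent with PCs being the smaller, slower-growing opportunity.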

On-Device AI Requires Ecosystem Collaboration

To capitalize on the budding market for on-device AI, chipset vendors, OEMs, and Independent Software Vendors (ISVs) are establishing strategic partnerships. Such partnerships are vital for providing the hardware and software tailored for productivity AI applications.

- Qualcomm has dominated the early opportunity for AI-supported smartphones, with partners HONOR, OPPO, and Motorola leveraging Snapdragon 8 Gen 3. Qualcomm also has strong ties with Microsoft, the developer of Windows, and the first Snapdragon X Elite PCs are expected to launch around the same time as the next version of Windows. In addition, PC ISV partners such as Beatoven.ai, CyberLink, Camo, DynamoFL, ArcSoft, and Elliptic Labs are championing generative AI software that runs on-device. Lastly, Qualcomm’s OEM partners (Acer, HP, Lenovo, ASUS, and Samsung) see the advantages of running productivity AI apps on the device.

- Intel has lofty ambitions for growing its AI PC Acceleration Program, working alongside more than 100 ISVs. Companies like Adobe, Audacity, Wondershare, and Zoom are key partners for scaling AI use cases. The AI PC Acceleration Program fosters collaboration between Independent Hardware Vendors (IHVs) and ISVs, giving them access to Intel’s AI tools and expertise. Intel’s software focus highlights how important productivity AI software is to on-device generative AI development.

- Microsoft’s silicon partners AMD, Intel, and Qualcomm can unleash the potential of on-device AI through the productivity-focused Copilot platform. Within the Office 365 suite on Windows 11, Copilot can transcribe and summarize meetings and long email threads, suggest action items, match your typical writing style, identify quantitative trends in spreadsheets, and more. These features suggest that Copilot is set to permanently change how users interact with Windows PCs; a productivity AI assistant is even likely to become a standout feature of the PC’s physical interface. Microsoft also integrates Copilot and other AI tools into Microsoft Edge to streamline writing, generate images, predict text, and more. The company’s recently announced Phi-2 and Phi-3 Small Language Models (SLMs) exemplify its commitment to supporting AI-based enterprise productivity apps on end devices. If that weren’t enough, Microsoft’s partnership with Intel and Samsung to scale AI on Samsung PCs signals its long-term vision for on-device AI.

Figure 1: Microsoft Copilot on Lenovo Windows 11 Laptop

How On-Device AI Development Will Progress

As chipset vendors and software providers increasingly turn toward on-device AI development, my team and I see a few notable takeaways. First, heterogeneous chipsets are the main technology powering productivity AI. Our forecasts indicate that heterogeneous chipsets will account for 96% of total edge AI inference & training chipsets in smartphones by 2028. A heterogeneous AI chipset distributes AI workloads across the Central Processing Unit (CPU), Graphics Processing Unit (GPU), and Neural Processing Unit (NPU), so each task can run on the engine best suited to it. This architecture delivers significant efficiency gains compared with earlier approaches that ran AI inference on a single dedicated processing engine.
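
The idea of distributing work across the CPU, GPU, and NPU can be illustrated with a toy dispatcher. This is a hypothetical sketch, not any vendor's scheduler: the `Workload` fields and the routing rules (sustained inference to the NPU, short throughput-heavy bursts to the GPU, everything else to the CPU) are simplifying assumptions. Real runtimes typically weigh many more factors, such as operator support, power state, and thermal headroom.

```python
from dataclasses import dataclass
from enum import Enum


class Engine(Enum):
    CPU = "CPU"
    GPU = "GPU"
    NPU = "NPU"


@dataclass
class Workload:
    name: str
    sustained: bool        # runs continuously (e.g., live meeting transcription)
    highly_parallel: bool  # large batched math (e.g., image generation)


def route(w: Workload) -> Engine:
    """Hypothetical routing rules for a heterogeneous AI chipset."""
    if w.sustained:
        return Engine.NPU  # assumption: NPU is most power-efficient for long-running inference
    if w.highly_parallel:
        return Engine.GPU  # assumption: GPU handles short, throughput-heavy bursts
    return Engine.CPU      # small or control-heavy tasks stay on the CPU


jobs = [
    Workload("meeting transcription", sustained=True, highly_parallel=False),
    Workload("image generation", sustained=False, highly_parallel=True),
    Workload("text autocomplete", sustained=False, highly_parallel=False),
]

for job in jobs:
    print(f"{job.name:<22} -> {route(job).value}")
```

The point of the sketch is the design choice itself: rather than forcing every model onto one engine, the chipset matches each workload to the processor whose strengths fit it.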

Another area to consider is the size of AI models. Most of the hype around generative AI has centered on Large Language Models (LLMs). For example, GPT-3, arguably the main catalyst of widespread awareness of generative AI, has 175 billion parameters. However, chipset developers like Intel see tremendous value in smaller AI models with fewer than 15 billion parameters. These compact models can run efficiently on end-user devices, delivering performance breakthroughs and cost savings (e.g., no networking costs) for productivity AI. Tiny language models (around 3 billion parameters or fewer) have also emerged recently and are well-suited for narrower applications on resource-constrained “legacy” devices. For example, Apple’s recently released OpenELM language models range from 270 million to 3 billion parameters. Available on the Hugging Face Hub, these open-source models present a great opportunity for developers to collaborate and push for greater AI innovation.
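
One reason parameter count matters so much on-device is memory. A rough rule of thumb is that weight storage equals the number of parameters multiplied by the bytes stored per parameter. The sketch below applies it to the model sizes mentioned above; the numeric precisions (16-bit and 4-bit) are illustrative assumptions, and the estimate ignores activations, KV cache, and runtime overhead, so real requirements are higher.

```python
# Rough weight-memory estimate: parameters x bits per parameter / 8.
# Precisions are illustrative assumptions; activations, KV cache, and
# runtime overhead are NOT included, so real usage is higher.


def weights_gb(params: int, bits_per_param: int) -> float:
    """Approximate size of model weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_param / 8 / 1e9


models = {
    "GPT-3 (175B)": 175_000_000_000,
    "Small model (15B)": 15_000_000_000,
    "Tiny model (3B)": 3_000_000_000,
}

for name, params in models.items():
    fp16 = weights_gb(params, 16)
    int4 = weights_gb(params, 4)
    print(f"{name:<18} fp16: {fp16:7.2f} GB   int4: {int4:6.2f} GB")
```

By this estimate, a 3-billion-parameter model at 4-bit precision needs roughly 1.5 GB for its weights, within reach of current smartphones, whereas GPT-3's weights at 16-bit precision would need about 350 GB.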

Finally, app developers need innovative software solutions that make it easier to build productivity AI use cases. ABI Research asserts that chipset vendors have a key role to play here. Important software considerations for on-device AI development include:

- Machine Learning (ML)-based optimization tools

- Open-source models and optimization tools

- Software that promotes hardware interoperability

- Software Development Kits (SDKs) that accelerate AI processing and help tailor applications to novel chip architectures
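
As one illustration of what model optimization tooling does, the sketch below shows symmetric 8-bit post-training quantization of a weight list, a common technique for shrinking models to fit device memory. It is a minimal pure-Python example under simplifying assumptions (a single per-tensor scale, no calibration data) and does not reflect how any particular vendor SDK implements quantization.

```python
# Minimal symmetric int8 quantization with one scale per tensor.
# Simplifying assumptions: no calibration set, no per-channel scales.


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] plus a shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the int8 values."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.05, 0.40, -0.33]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print("int8 values:", q)
print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each weight now takes one byte instead of four (32-bit float) or two (16-bit), at the cost of a rounding error no larger than half the scale; production tools refine this with per-channel scales and calibration data.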

Learn more about how chipset vendors and software players are approaching on-device AI development in ABI Research’s whitepaper, Assessing the On-Device Artificial Intelligence (AI) Opportunity for Enterprises and Consumers. This content is part of our AI & Machine Learning Research Service.

Larbi Belkhit

Malik Saadi
