Machine Learning (ML) is increasingly being integrated into edge computing solutions. ML at the edge improves the user experience through lower latency, near-real-time data analysis, and stronger privacy and security. Vendors are responding with services and platforms that streamline development and reduce the time to market. In this post, you'll read about five examples of edge ML that illustrate how the combination works in practice.

What's Causing the Demand for Machine Learning on the Edge?

The diverse nature of edge ML, data privacy regulations, lack of valuable big data, and high development costs are the main drivers for adopting innovative edge ML solutions.

Most technologies that address edge ML computing challenges involve data management, raw data analysis, feature engineering, inference engine generation, visualization, deployment, monitoring, and evaluation.

Edge ML Market Snapshot

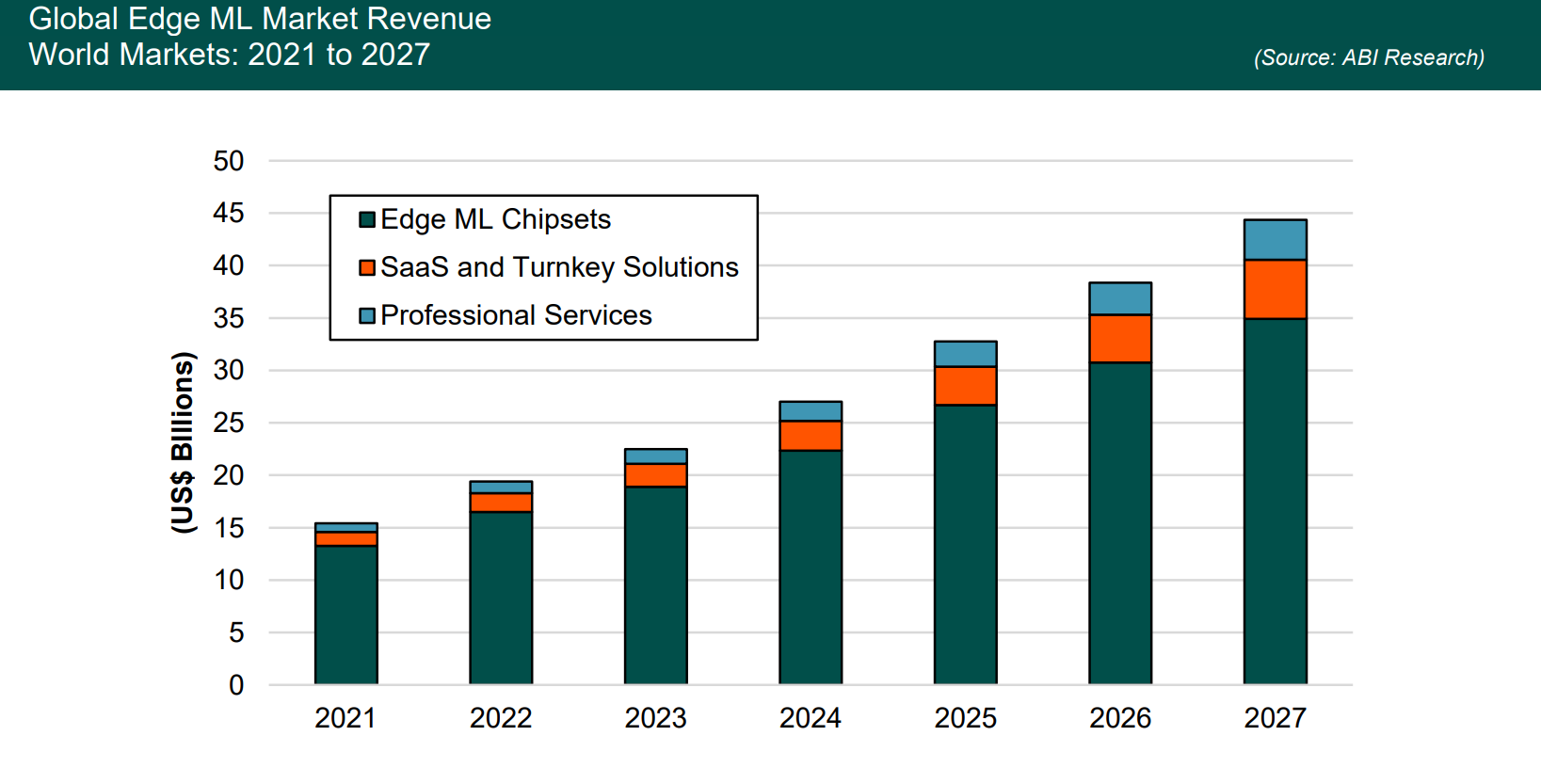

Edge machine learning vendors offer their services via Software-as-a-Service (SaaS) and turnkey solutions. The global edge ML market will grow at a Compound Annual Growth Rate (CAGR) of 26% between 2022 and 2027, reaching US$5.7 billion by the end of ABI Research's forecast period.

By 2027, SaaS and turnkey solution providers of edge ML will represent 13% of the total market, dominated by chipset vendors.
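To put the forecast figures in perspective, the short calculation below backs out the 2022 market size implied by a 26% CAGR and a US$5.7 billion end value, and converts the 13% share into revenue. It is a rough sketch, not ABI Research's own model: it assumes five compounding periods between 2022 and 2027, and it assumes the 13% share applies to the US$5.7 billion total.

```python
def implied_base(end_value: float, cagr: float, years: int) -> float:
    """Back out the starting value implied by an end value and a CAGR."""
    return end_value / (1.0 + cagr) ** years


END_VALUE_2027 = 5.7       # US$ billions, from the forecast quoted above
CAGR = 0.26                # 26% compound annual growth rate
YEARS = 5                  # assumption: 2022 -> 2027 is five compounding periods
SAAS_TURNKEY_SHARE = 0.13  # assumption: share is measured against the 5.7 total

base_2022 = implied_base(END_VALUE_2027, CAGR, YEARS)
saas_turnkey_2027 = END_VALUE_2027 * SAAS_TURNKEY_SHARE

print(f"Implied 2022 market size: US${base_2022:.2f} billion")
print(f"Implied 2027 SaaS/turnkey revenue: US${saas_turnkey_2027:.2f} billion")
```

Under those assumptions, the market would have started at roughly US$1.8 billion in 2022, and SaaS and turnkey providers would account for about US$0.74 billion in 2027.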

Edge ML Example #1: Amazon SageMaker Neo

Amazon Web Services (AWS) offers a platform called SageMaker Neo, which helps developers optimize models for edge ML devices. Whereas developers typically spend considerable time hand-tuning models for cloud instances and edge devices, SageMaker Neo automates the process.

The user chooses a machine learning model already trained in SageMaker, then picks a target hardware platform. With one click, SageMaker Neo applies the combination of optimizations that extracts the most performance from the ML model on the chosen cloud instance or edge device. To do this, Amazon SageMaker Neo relies on Apache TVM and on partner-provided compilers and acceleration libraries.
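The same "choose a model, choose a target" step can also be scripted rather than clicked. The sketch below builds the request for boto3's `create_compilation_job` call, which starts a Neo compilation job. The bucket names, role ARN, job name, input shape, and the choice of `jetson_nano` as the target are all hypothetical placeholders for illustration, not values from AWS or from this post.

```python
import json


def build_neo_compilation_request(job_name, role_arn, model_s3_uri, output_s3_uri,
                                  target_device="jetson_nano",
                                  framework="PYTORCH",
                                  input_shape=None):
    """Assemble the keyword arguments for SageMaker's create_compilation_job."""
    # Hypothetical default: a single 224x224 RGB image named "input0".
    input_shape = input_shape or {"input0": [1, 3, 224, 224]}
    return {
        "CompilationJobName": job_name,
        "RoleArn": role_arn,
        "InputConfig": {
            "S3Uri": model_s3_uri,                         # trained model artifact
            "DataInputConfig": json.dumps(input_shape),    # input names and shapes
            "Framework": framework,
        },
        "OutputConfig": {
            "S3OutputLocation": output_s3_uri,             # where the compiled model goes
            "TargetDevice": target_device,                 # the hardware to optimize for
        },
        "StoppingCondition": {"MaxRuntimeInSeconds": 900},
    }


def submit(request):
    """Send the request to SageMaker. Requires boto3 and AWS credentials."""
    import boto3  # imported lazily so building the request needs no AWS setup
    return boto3.client("sagemaker").create_compilation_job(**request)


# All identifiers below are placeholders, not real resources.
request = build_neo_compilation_request(
    job_name="example-neo-job",
    role_arn="arn:aws:iam::123456789012:role/ExampleNeoRole",
    model_s3_uri="s3://example-bucket/models/model.tar.gz",
    output_s3_uri="s3://example-bucket/compiled/",
)
print(json.dumps(request, indent=2))
```

Calling `submit(request)` would start the job in an AWS account with the right permissions; the sketch only prints the request so it can be inspected without credentials.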

Edge ML Example #2: Microsoft Azure

Like AWS, Microsoft uses its cloud-based platform, Azure, to provide machine learning on the edge for enterprise customers. Data is collected from Azure IoT Edge devices and processed on Azure Stack Edge, which is designed to accelerate on-device ML inference.

Microsoft’s edge ML solution relies on other Azure services like Azure Machine Learning, Azure Container Instances, Azure Kubernetes Service (AKS), and Azure Functions. With edge computing acceleration hardware, machine learning models can be compared and run locally, processing data on-premises for greater reliability.

Edge ML Example #3: Latent AI

Unlike AWS and Microsoft Azure, Silicon Valley-based Latent AI focuses solely on providing solutions for edge devices, frameworks, and Operating Systems (OSs). Latent AI Efficient Inference Platform (LEIP) Recipes optimize an ML model by combining instructions with pre-configured assets, producing accurate executables for tasks like object detection.

According to the company’s website, LEIP Recipes can reduce the time to market by 10x. ML model formatting that once took a machine learning engineer three days now takes only a few hours. Latent AI Recipes, as they are cleverly called, are optimized for power, latency, and memory for specific ML models in edge computing contexts.

Edge ML Example #4: Blaize’s AI Studio

Blaize’s cloud-based AI Studio is the first open, no-code Artificial Intelligence (AI) platform designed for edge computing management and Machine Learning Operations (MLOps) workflows. Particularly interesting is the marketplace feature, which gives developers timely access to resources instead of leaving them to spend days chasing them down.

Automatic edge-aware optimization models come in various packages, enabling developers to choose ML models that are tailor-made for their unique needs. Blaize’s edge ML platform allows enterprises to reach Return on Investment (ROI) more quickly as the platform significantly cuts down on time to deployment.

Edge ML Example #5: Edge Impulse

Edge Impulse introduced a proprietary compiler in 2020 called Edge Optimized Neural (EON), designed to optimize large machine learning models for resource-limited edge devices. ML models can reach optimal performance levels with less memory and storage via Software Development Kits (SDKs) and libraries that assist with quantization.
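To make the quantization step concrete, the sketch below shows generic 8-bit affine quantization of a handful of weights. It is not Edge Impulse's implementation, which this post does not describe; the function names and sample weights are made up for illustration. Storing each weight as one `int8` instead of a 32-bit float cuts its storage by a factor of four, at the cost of a small rounding error.

```python
def quantize_int8(values):
    """Map floats to int8 codes using a shared scale and zero point."""
    lo, hi = min(values), max(values)
    lo, hi = min(lo, 0.0), max(hi, 0.0)   # keep 0.0 exactly representable
    scale = (hi - lo) / 255.0 or 1.0      # fall back to 1.0 if all values are 0
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize(codes, scale, zero_point):
    """Recover approximate floats from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


# Hypothetical weights, chosen only to exercise the functions.
weights = [-0.62, -0.1, 0.0, 0.33, 0.91]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 codes:", codes)
print(f"scale={scale:.6f}, zero_point={zero_point}, max error={max_error:.6f}")
```

The reconstruction error per weight stays within about one quantization step (`scale`), which is why quantized models can often keep most of their accuracy while using far less memory.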

Edge Impulse also offers a tool called EON Tuner, which searches for the best-fitting ML models for a target device and performs end-to-end optimization.

Another great feature of Edge Impulse’s solution is the ability to simulate hardware performance before deploying to an edge device and to inspect the accompanying metrics. This feature allows users to apply machine learning model changes to all edge devices whenever they want. The only requirement is that all the computing is done over the cloud.

Winning Formula for Edge ML

Vendors providing edge ML enablement must develop a flexible computing platform with plenty of learning resources for enterprise users. At the same time, enabling a broad range of hardware interoperability with the platform is a crucial predictor of customer acquisition. Additionally, no-code and low-code solutions are highly desirable, as they considerably reduce the time to market for organizations with talent-diverse teams.

To learn more about machine learning on the edge, download ABI Research’s Edge ML Enablement: Development Platforms, Tools, and Solutions research analysis report. This research is part of the company’s AI & Machine Learning Research Service.

Paul Schell

Larbi Belkhit

Malik Saadi
