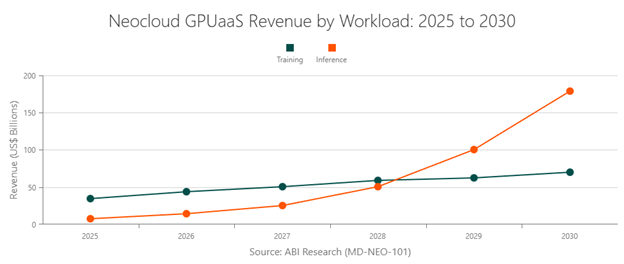

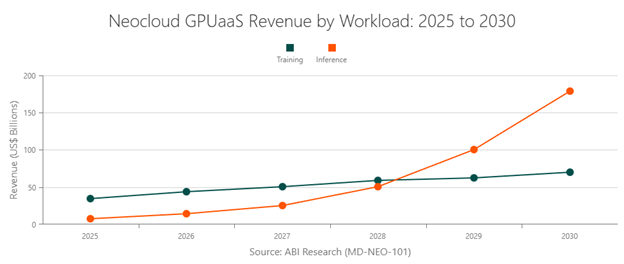

ABI Research projects that Graphics Processing Unit-as-a-Service (GPUaaS) revenue from neoclouds will surge from US$42 billion in 2025 to US$250 billion by 2030. Enterprises are rapidly partnering with neocloud providers to meet next-generation Artificial Intelligence (AI) workloads. Compared to traditional hyperscalers (Amazon Web Services (AWS), Azure, Google Cloud), neoclouds offer better price transparency, regional compliance, and specialist services.
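The projection above gives only the two endpoint figures. As a quick back-of-the-envelope check, the growth rate they imply can be derived with the standard compound annual growth rate formula. The function name `implied_cagr` is our own; only the two revenue figures and the years come from the text.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate between two values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: US$42 billion (2025) to US$250 billion (2030).
gpuaas_cagr = implied_cagr(42.0, 250.0, years=2030 - 2025)
print(f"Implied GPUaaS revenue CAGR: {gpuaas_cagr:.1%}")
```

With these inputs, the implied growth rate works out to roughly 43% per year.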

While the appeal is evident, not all neocloud platforms are built to support the scale, performance, and reliability needed for modern AI infrastructure. The Chief Technology Officer (CTO)/Chief Information Officer (CIO) neocloud buyer checklist should include:

- Resilient hardware & supply chain strategy

- Large-scale distributed GPU cluster capability

- Advanced AI optimization stack

- Integrated cloud, data & AI platform services

- Robust software co-development

- High-performance interconnect & network topology

Beyond Graphics Processing Unit (GPU) access, a neocloud partner must have a diverse hardware supply chain strategy, large-scale distributed GPU clusters, and high-performance interconnect bandwidth. Together, these ensure a predictable supply of essential technologies (e.g., chipsets) and the ability to run large-scale training workloads in the cloud.

Other critical features to look for in your neocloud partner include AI optimization efficiencies, close ties to software suppliers (for co-development), and support for adjacent services across cloud, data, and AI. Neoclouds possessing these features help enterprises accelerate AI deployments, reduce complexity, and minimize costs.

That last point is especially relevant. According to Flexera’s 2026 State of the Cloud Report, cost-efficiency/savings is the top metric that enterprises prioritize when gauging progress toward their cloud goals (81% of survey respondents). The right neocloud partner will help drive both infrastructure and operating costs down over time.

This resource outlines a clear framework for evaluating neocloud partners. ABI Research’s expert-led analysis identifies six criteria that make a neocloud not just a compute provider, but a partner that delivers measurable business impact.

The table below summarizes our study. The rest of the article goes in-depth on how CTOs and CIOs should approach the neocloud selection process.

Neocloud Partner Evaluation Checklist: What to Look for and What to Avoid

(Source: ABI Research)

| Criterion | What to Look for | Red Flags |
| --- | --- | --- |
| Hardware & Supply Chain Strategy | Reliable access to AI hardware | Limited or uncertain supply |
| Max Distributed Cluster Scale | Ability to support large AI workloads | Small-scale or unclear limits |
| AI Optimization Stack Maturity | Software that improves performance and efficiency | Basic or missing optimization tools |
| Adjacent Cloud, Data & AI Services | Integrated tools beyond compute | Fragmented or DIY setup required |
| Partner & ISV Co-Development | Strong ecosystem and real-world solutions | Limited partnerships or use cases |
| Interconnect Bandwidth & Topology | Fast, efficient GPU communication | Network bottlenecks or instability |

What is a Neocloud?

A neocloud is a highly specialized cloud service provider that is disrupting the tech industry. These companies differentiate themselves by serving specific niches such as AI-centric infrastructure, sustainability, edge computing, sovereign cloud, developer friendliness, and cost efficiency. In many ways, neoclouds mirror the evolving compute needs of technology decision makers.

A growing number of organizations are considering a partnership with a neocloud provider or have already formed one. However, the concept is still relatively new in the enterprise world. To help CIOs and CTOs choose the right neocloud partner, this buyer's guide identifies six essential features to evaluate in the decision-making process.

Key Insights:

- Neoclouds are becoming essential for AI infrastructure. Enterprises are adopting them for better pricing transparency, flexibility, and support for specialized AI workloads.

- Hardware access and supply chain strength are critical. Providers must ensure reliable GPU availability through diverse sourcing and strong vendor partnerships.

- Scalable GPU clusters enable advanced AI workloads. Large, distributed clusters are required to support complex training and high-performance computing use cases.

- Software and optimization layers drive efficiency. Mature AI stacks improve performance, reduce training time, and lower overall costs.

- End-to-end platforms and ecosystems add real value. The best neoclouds combine integrated services, strong software partnerships, and high-performance networking to support full AI workflows.

When Do You Need a Neocloud Partner?

A neocloud provider is a strong option for organizations with unique requirements or those looking beyond traditional hyperscalers (AWS, Azure, Google Cloud). While nobody beats hyperscalers in scale, neoclouds deliver a level of region-specific compliance, price transparency, and flexibility that general-purpose clouds currently cannot match (learn more in our Research Highlight, Three Gripes Driving Enterprises from Hyperscalers to Neocloud Providers).

Companies typically select a neocloud when they aim to close gaps in their cloud strategy. Common reasons for the increase in neocloud collaborations include:

- Targeted, real-time AI/Machine Learning (ML) applications

- Specialized GPU infrastructure

- Geographic data sovereignty

- Economic strain

- Improved interoperability with existing cloud services

- Strong developer experience

The rest of this guide provides a framework for CIOs and CTOs actively researching potential neocloud partners.

Six Innovative Features to Look for in a Neocloud Partner

ABI Research’s Neocloud Providers competitive ranking scored vendors against six critical innovation criteria. The checklist below covers what any reliable, long-term partner should offer.

Neocloud Partner Evaluation Checklist: What to Look for and What to Avoid

(Source: ABI Research)

| Criteria | What to look for | Red flags |
| --- | --- | --- |
| Hardware & supply chain strategy | Multiple hardware vendors, strong chipmaker partnerships, long-term supply agreements, global data center presence | Reliance on a single supplier, unclear sourcing, limited regional availability |
| Max distributed cluster scale | Ability to support hundreds or thousands of GPUs in a single workload, proven large-scale deployments | Low GPU limits, unclear scaling capabilities, lack of real-world large cluster examples |
| AI optimization stack maturity | Advanced software that improves performance (e.g., faster training, stable jobs), easy scaling tools | Minimal software layer, frequent job failures, no clear performance gains |
| Adjacent cloud, data & AI services | Integrated tools for data pipelines, model deployment, and monitoring | Requires multiple third-party tools, fragmented workflows, high integration effort |
| Partner & ISV co-development | Active ecosystem of partners, industry-specific AI solutions, co-developed use cases | Limited partnerships, generic offerings, no vertical-specific solutions |
| Interconnect bandwidth & topology | High-speed networking (e.g., InfiniBand), low latency, efficient multi-GPU communication | Slow or inconsistent performance at scale, networking bottlenecks, poor |
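One way to put the six criteria to work during vendor selection is a simple weighted scorecard. The sketch below is illustrative only: the weights, provider names, and scores are hypothetical placeholders, not ABI Research's methodology or results, and each organization would set its own weights.

```python
# The six criteria from the checklist, as short identifiers (our naming).
CRITERIA = (
    "hardware_supply_chain",
    "cluster_scale",
    "optimization_stack",
    "adjacent_services",
    "isv_co_development",
    "interconnect",
)

# Hypothetical weights chosen for illustration; they must sum to 1.0.
HYPOTHETICAL_WEIGHTS = {
    "hardware_supply_chain": 0.20,
    "cluster_scale": 0.20,
    "optimization_stack": 0.15,
    "adjacent_services": 0.15,
    "isv_co_development": 0.10,
    "interconnect": 0.20,
}


def weighted_score(scores: dict, weights: dict = HYPOTHETICAL_WEIGHTS) -> float:
    """Combine per-criterion scores (0 to 10) into one weighted score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(CRITERIA) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name in CRITERIA:
        if not 0 <= scores[name] <= 10:
            raise ValueError(f"score for {name} must be between 0 and 10")
    return sum(weights[name] * scores[name] for name in CRITERIA)


# Hypothetical internal assessments of two unnamed candidates.
provider_a = dict(zip(CRITERIA, (8, 9, 6, 5, 6, 9)))
provider_b = dict(zip(CRITERIA, (6, 5, 8, 9, 8, 6)))

for label, scores in (("Provider A", provider_a), ("Provider B", provider_b)):
    print(f"{label}: {weighted_score(scores):.2f} / 10")
```

Raising the weight on a criterion that matters most to your workloads (for example, interconnect for large training jobs) changes the ranking, which is the point of making the weights explicit.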

Resilient Hardware & Supply Chain Strategy

Technology decision makers should ask themselves: does this neocloud have a diverse range of supply chain partners? Many organizations turn to neocloud providers to avoid the constraints of hardware lock-in within hyperscale data center environments.

The last thing you want is to repeat the situation that prompted you to seek an alternative cloud service provider in the first place. Therefore, make sure that your neocloud provider has extensive relationships with hardware manufacturers (accelerators, chipsets, servers, etc.).

It’s also crucial that the vendor has long-term agreements in place with said suppliers. Given the current geopolitical turbulence, supply chains can quickly become disrupted. A neocloud provider with diverse sourcing gives organizations greater confidence in consistent compute availability.

Example

Nscale has a vertically integrated supply chain model that enables it to deliver cloud services on a global scale. It signed one of the largest AI infrastructure contracts ever with Microsoft in October 2025. This expanded deal will see about 200,000 NVIDIA GPUs shipped to data center campuses in Europe and North America.

Large-Scale Distributed GPU Cluster Capability

Next, how many GPUs can the neocloud provider combine to run a single distributed AI training job? ABI Research projects a 68% Compound Annual Growth Rate (CAGR) in training chipset shipments between 2024 and 2031, based on our Artificial Intelligence and Machine Learning: Edge AI market data. Moreover, training workloads dominate neocloud GPUaaS revenue today (inference will dominate later in the decade).

Effective large-scale AI deployments require tightly integrated clusters of hundreds to thousands of GPUs. If your neocloud partner lacks this scale, your Information Technology (IT) teams will be limited in how quickly they can develop AI implementations. By contrast, expansive GPU clusters free teams to spend more time on higher-value tasks.

Example

CoreWeave’s marketing materials indicate that its single clusters can scale to more than 100,000 GPUs. Key to this capability are strategic partnerships with NVIDIA (GB200 NVL72 systems) and IBM (“Granite” Large Language Models (LLMs)).

Advanced AI Optimization Stack

Technology leaders should also assess a neocloud’s AI optimization capabilities. Ideally, an optimization stack should improve throughput by 20% to 40% without switching hardware.

Consider this scenario: you are comparing two potential neocloud partners that have similar GPU clusters. However, one of them integrates a software layer that cuts training time by a further 10%. That difference should carry significant weight in the purchase decision (alongside the other features).

The result is faster, cheaper, and more reliable AI rollouts.
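To see what a throughput gain means in practice, it helps to convert it into training time and cost. The sketch below assumes a fixed amount of training work, the same GPU count, and a flat price per GPU-hour. The job size and the US$2.50 price are hypothetical inputs chosen for illustration, not vendor figures.

```python
def training_time_reduction(throughput_gain: float) -> float:
    """Fraction of wall-clock time saved when throughput rises by the given
    fraction (0.20 means 20% more work completed per hour)."""
    if throughput_gain <= -1:
        raise ValueError("throughput_gain must be greater than -1")
    return 1 - 1 / (1 + throughput_gain)


def job_cost(baseline_hours: float, gpus: int, price_per_gpu_hour: float,
             throughput_gain: float = 0.0) -> float:
    """Cost of a fixed-size training job after applying a throughput gain."""
    hours = baseline_hours / (1 + throughput_gain)
    return hours * gpus * price_per_gpu_hour


# Hypothetical job: 1,000 hours on 512 GPUs at US$2.50 per GPU-hour.
for gain in (0.0, 0.20, 0.40):
    saved = training_time_reduction(gain)
    cost = job_cost(1_000, 512, 2.50, throughput_gain=gain)
    print(f"gain {gain:.0%}: time saved {saved:.1%}, cost US${cost:,.0f}")
```

Under these assumptions, a 20% throughput gain shortens the job by about 16.7% and a 40% gain by about 28.6%. The time saved is always smaller than the throughput gain itself, which is worth keeping in mind when comparing vendor claims.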

Example

San Jose, CA-based Lambda’s AI optimization capabilities are among the best recognized in ABI Research’s neocloud competitive assessment. Lambda mostly relies on third-party software such as CUDA, drivers, PyTorch, TensorFlow, and other tools. These integrations translate to simple, “one-click clusters” for customers.

Integrated Cloud, Data & AI Platform Services

A neocloud provider must go beyond raw computing power. Reliable partners will also offer adjacent services across cloud, data, and AI. In other words, it’s an all-encompassing platform where you have everything you need in one place. There is no need to stitch together multiple tools into your AI infrastructure yourself.

Such integrated services help create seamless data-to-AI workflows, accelerating deployment and enabling Machine Learning Operations (MLOps) across the organization.

Example

Ranked second in our assessment, Nebius offers comprehensive neocloud services. Besides its impressive GPUaaS solutions, the Dutch company offers:

- Managed MLflow, managed PostgreSQL, and fully managed Kubernetes

- Enterprise-grade inference services through Token Factory

- Dedicated endpoints with a 99.9% Service Level Agreement (SLA)

- Predictable low-latency scaling

- Zero-retention execution modes for enhanced data control
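For context on the 99.9% SLA figure in the list above, an availability percentage can be converted into a downtime budget. This is a minimal sketch that treats the SLA as a simple uptime percentage over a calendar period. Actual SLA terms (measurement windows, exclusions, credits) are set by each provider and are not modeled here.

```python
def allowed_downtime_minutes(sla_percent: float, period_days: float) -> float:
    """Maximum downtime, in minutes, that still meets the SLA over the period."""
    if not 0 <= sla_percent <= 100:
        raise ValueError("sla_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - sla_percent / 100)


monthly = allowed_downtime_minutes(99.9, period_days=30)
yearly = allowed_downtime_minutes(99.9, period_days=365)
print(f"99.9% over 30 days:  {monthly:.1f} minutes")
print(f"99.9% over 365 days: {yearly / 60:.2f} hours")
```

Under this simplification, 99.9% allows about 43 minutes of downtime in a 30-day month, or a little under nine hours per year.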

Robust Software Co-Development

Ultimately, the cloud is used to develop new AI capabilities for specific industries. Neoclouds cannot do this with hardware and third-party integrations alone. For this reason, it is critical to select a provider that builds strong partnerships with enterprise Software-as-a-Service (SaaS) providers and Independent Software Vendors (ISVs).

These collaborations demonstrate that the neocloud can successfully create novel AI-powered business applications. Leading neocloud providers partner with software players to fine-tune platforms for manufacturing, healthcare, finance, retail, and other verticals. In turn, innovation cycles are shortened and your team can start seeing AI infrastructure investments convert to measurable value quickly.

Example

Nebius received one of the highest scores from ABI Research for software co-development. First, the neocloud provider has strong ties with two tech juggernauts: Microsoft and NVIDIA. Case in point, Nebius is considered an Exemplar Cloud Provider by NVIDIA, a designation reserved for companies delivering top-tier compute performance on NVIDIA GPUs.

Secondly, Nebius’s Token Factory supports popular LLM software families such as Llama, DeepSeek, Gemma, Mistral, NVIDIA Nemotron, and GPT-OSS. Customers can also host their own LLMs within the neocloud platform to develop AI applications. This LLM diversity affords organizations many options when it comes to how they approach the development process.

Finally, Nebius’s cloud solutions can integrate with a wide range of software sources:

- Hugging Face

- SkyPilot

- Dstack

- Run:ai

- MLflow/Kubeflow

- JupyterHub

High-Performance Interconnect & Network Topology

Finally, a neocloud partner must be able to support High-Performance Computing (HPC) by investing in significant interconnect bandwidth. It’s advisable to measure quality based on InfiniBand, advanced Ethernet, or similar interconnect standards.

Current and future AI model training will depend on distributed workloads spanning multiple GPUs and nodes. These compute-hungry applications need consistent, low-latency communication to deliver HPC. A high-performance interconnect and network topology is critical to running complex models efficiently and scaling AI infrastructure.
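A rough cost model shows why interconnect bandwidth matters for distributed training. The sketch below uses the textbook ring all-reduce estimate, in which each GPU sends and receives 2(N-1) chunks of size S/N. This is a simplification chosen for illustration: real collective libraries pick among several algorithms and overlap communication with compute, and the payload size and link speeds below are hypothetical.

```python
def ring_allreduce_seconds(message_bytes: float, num_gpus: int,
                           bandwidth_bytes_per_s: float,
                           latency_s: float = 0.0) -> float:
    """Estimate the time for one ring all-reduce across num_gpus devices."""
    if num_gpus < 2:
        return 0.0  # nothing to exchange with a single device
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth_bytes_per_s must be positive")
    steps = 2 * (num_gpus - 1)                  # reduce-scatter + all-gather
    bytes_per_step = message_bytes / num_gpus   # each step moves one chunk
    return steps * (latency_s + bytes_per_step / bandwidth_bytes_per_s)


# Hypothetical example: synchronizing 10 GB of gradients across 8 GPUs.
gradient_bytes = 10e9
fast_link = ring_allreduce_seconds(gradient_bytes, 8, 50e9)    # 50 GB/s links
slow_link = ring_allreduce_seconds(gradient_bytes, 8, 12.5e9)  # 12.5 GB/s links
print(f"50 GB/s:   {fast_link:.2f} s per synchronization")
print(f"12.5 GB/s: {slow_link:.2f} s per synchronization")
```

In this simplified model, the slower link takes four times as long per synchronization (1.40 s versus 0.35 s). Because a training run repeats this step many thousands of times, a network bottleneck translates directly into idle GPUs.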

Example

ABI Research scored Nscale a 9/10 for interconnect bandwidth and topology. This top-ranked neocloud combines InfiniBand, high-bandwidth Ethernet with Remote Direct Memory Access (RDMA), and NVLink/NVSwitch for NVL72 systems. These interconnections are further supported by joint innovation with Nokia across switching and optical layers. Nscale’s approach to HPC and interconnect architecture reflects three key themes:

- Fabric optimized for collectives

- Non-blocking design

- High bandwidth/low latency

From Infrastructure to Impact: Making Neocloud Work for Your Business

Choosing a neocloud partner is one of the most consequential decisions that CTOs and CIOs can make in 2026. As our buyer's guide posits, the right provider can help enterprises expand their AI infrastructure in a cost-effective and developer-friendly manner. Neoclouds also often hold the keys to complying with region-specific regulations.

However, not all neoclouds are created equal. Enterprises should look for neocloud partners that combine reliable hardware access, large-scale compute capabilities, and high-performance networking with strong software optimization and integrated AI services. Equally important, your neocloud provider must actively collaborate with software suppliers and support end-to-end AI workflows. With these key capabilities, your team can enable faster, more scalable, and more practical AI deployments.

Frequently Asked Questions

How do you choose the right neocloud provider for AI workloads?

Choosing the right neocloud provider comes down to evaluating whether it can support large-scale AI workloads with reliable performance. Key factors include access to GPUs, the ability to scale distributed clusters, and strong interconnect performance for fast data processing. Enterprises should also ensure the provider can deliver consistent compute availability through a resilient hardware and supply chain strategy.

What features should CTOs and CIOs look for in a neocloud partner?

Technology leaders should prioritize six core areas: hardware supply chain strength, large-scale GPU cluster capability, AI optimization software, integrated cloud and data services, strong software partnerships, and high-performance networking. These features ensure that AI workloads run efficiently, scale effectively, and integrate smoothly into existing systems. A well-rounded neocloud partner goes beyond compute to support end-to-end AI deployment.

Why are enterprises choosing neocloud providers over hyperscalers?

Enterprises are turning to neocloud providers because they offer more flexibility, price transparency, and region-specific compliance than traditional hyperscalers. They are particularly attractive for specialized AI and ML workloads that require tailored infrastructure. Neoclouds also help organizations close gaps in their cloud strategy by providing developer-friendly environments and better interoperability with existing systems.